Note: This is a somewhat technical post

While writing my previous JMP paper, *On elemental and configural models of associative learning*, I was also working out how the equivalence between elemental and configural models could be exploited to develop better analytical methods. My rationale was that associative learning models are usually studied either intuitively or through computer simulation, which makes it difficult to establish general claims rigorously. After some time, and thanks to fantastic input from the reviewers and the editor, I am happy that *Studying associative learning without solving learning equations* finally came out over the summer. The paper shows that the predictions of many models can be calculated analytically simply by solving systems of linear equations, which is much easier than trying to solve the models’ learning equations. For example, in a simple summation experiment (training an associative strength of 1/2 to stimulus A and 1/2 to stimulus B), we can calculate that the associative strength $V_{AB}$ of the compound AB is, in the Rescorla & Wagner (1972) model:

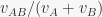

and in Pearce’s (1987) model:

$$V_{AB} = \frac{1}{(2-c)(1+c^2)}$$

where, in both cases, $c$ is the proportion of stimulus elements in common between A and B. This makes it immediately apparent that, in Rescorla & Wagner (1972), the associative strength of AB ranges between 1/2 and 1, while in Pearce (1987) it ranges between 1/2 and 0.54. These results were previously known only in the special case $c = 0$.
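The ranges quoted above can be checked by writing each model's asymptotic conditions as a 2-by-2 linear system and solving it. The Python sketch below is an illustration under stated assumptions, not code from the paper: A and B each activate n binary elements, a proportion c of which are shared; the compound AB activates the union of their elements; Rescorla & Wagner weights start at zero; and Pearce's similarity between two patterns is taken to be the product of the proportions of shared elements in each. The helper names (`solve_2x2`, `rw_compound`, `pearce_compound`) are hypothetical. Exact fractions are used so results can be compared with closed forms without rounding.

```python
from fractions import Fraction

LAMBDA = Fraction(1, 2)  # associative strength trained to A and to B (assumed)


def solve_2x2(a11, a12, a21, a22, b1, b2):
    """Solve a11*x1 + a12*x2 = b1, a21*x1 + a22*x2 = b2 by Cramer's rule."""
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("singular system (e.g. c = 1, where A and B coincide)")
    x1 = (b1 * a22 - a12 * b2) / det
    x2 = (a11 * b2 - a21 * b1) / det
    return x1, x2


def rw_compound(c, lam=LAMBDA):
    """Asymptotic V_AB in an elemental Rescorla-Wagner sketch (hypothetical helper).

    Assumed setup: weights start at zero, so the asymptotic weight vector is
    w = a*x_A + b*x_B with x_A.w = x_B.w = lam. Rescaling a' = a*n and
    b' = b*n makes n drop out: a' + c*b' = lam, c*a' + b' = lam.
    """
    c = Fraction(c)
    a, b = solve_2x2(1, c, c, 1, lam, lam)
    # AB contains every element of A and of B, so V_AB = x_AB.w = a' + b'.
    return a + b


def pearce_compound(c, lam=LAMBDA):
    """Asymptotic V_AB in a Pearce-style configural sketch (hypothetical helper).

    Assumed similarity: S(i, j) = (shared / n_i) * (shared / n_j). The
    configural units for A and B reach strengths e_a, e_b such that the
    output to each trained stimulus equals lam.
    """
    c = Fraction(c)
    s_ab = c * c              # S(A, B): shared = c*n, n_A = n_B = n
    s_compound = 1 / (2 - c)  # S(AB, A) = S(AB, B): shared = n, n_AB = (2 - c)*n
    e_a, e_b = solve_2x2(1, s_ab, s_ab, 1, lam, lam)
    # Response to AB generalizes from both trained configural units.
    return s_compound * e_a + s_compound * e_b


if __name__ == "__main__":
    for c in (Fraction(0), Fraction(1, 3), Fraction(1, 2)):
        print(f"c = {c}: Rescorla-Wagner V_AB = {float(rw_compound(c)):.4f}, "
              f"Pearce V_AB = {float(pearce_compound(c)):.4f}")
```

Under these assumptions the script prints V_AB = 1.0000 (Rescorla & Wagner) and 0.5000 (Pearce) at c = 0, and 0.7500 and 0.5400 at c = 1/3, where the Pearce value reaches its maximum. The case c = 1 is left out because A and B then coincide and the linear system becomes singular.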

I hope others will also use the method presented in the paper to derive new theoretical predictions and to design new theory-driven experiments!